5/100: Brain-to-Brain Interfaces - Upload Yourself

Or, What I Mean When I Say Megabrain

Disclaimer: Right off the bat, IANABS (the BS in this case stands for Brain Scientist). This is all research, reading, and attempting to understand. Here be dragons!

My educational background is in Computer Science and Psychology, or Human-Computer Interaction. In the real world™, this led to an interest and a career in User Experience Design.

However, I’m rather fascinated by futuristic concepts like Neurotechnology and AI; so much so that I’ve made Neurotech one of the focus areas of this sabbatical. For the last 5 days (and the next 95), I’m exploring an idea I had back in 2014, which I’ve since been affectionately referring to as “Megabrain”.

The Concept

To convey the thought behind Megabrain, I’d like you to visualize something for me. I want you to think back to a moment in your life when you felt truly connected to another human being. Totally on the same wavelength. This could have been a friend, a date, a child, or a stranger – and it could have been in joy or in sorrow. Got one?

Now imagine multiplying this connection by approximately 7 billion, and imagine having it on 24/7. It sounds a tad overwhelming, and it probably would be, unless appropriate throttling or selection mechanisms are put in place. However, if you were to invite a human from 1920 into our lives today, they’d probably be overwhelmed just by looking at their brand new iPhone!

So if we could suspend disbelief for a second, let’s first talk about the kinds of problems we could solve with this mechanism – then, about the problems we could introduce.

Problems

Wasted Time

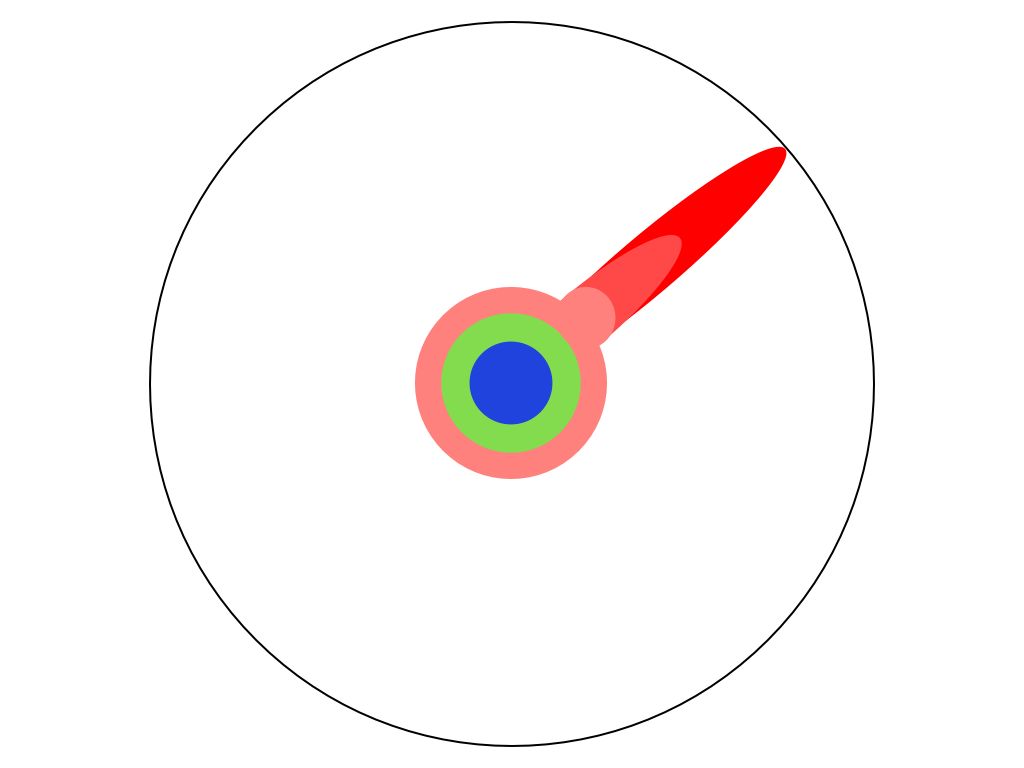

We spend large portions of our lives learning things someone else already knows, as per this helpful visualization of acquiring a PhD.

If the white circle is all human knowledge, the blue portion indicates stuff you learn in elementary school, green in high school, light red through your specialized Bachelor’s degree, medium red during your Master’s, and deep red by reading all existing research material in your slice of your field. Then finally – finally! – you’re ready to push past the boundary of all of humankind’s wisdom, and actually create or discover something new.

What if we could instead plug into a brain-net consisting of all existing learning and knowledge at a ripe, young, productive age? We could become immediately beneficial to the world, and begin creating zits – I meant dents – in the surface of humankind’s understanding of all the things. This would also mean we could sustain our ability to contribute longer.

I’d imagine selecting an area of specialization might also become easier, having everyone’s collective experience in all spheres behind you.

Empathy Gaps

A lot has been said and written (and at least one lovely video produced) about the value of the ability to put yourself in another’s shoes. Today, one of the best ways to convey one’s situation is by telling a story about it – through different media, like conversation, writing, etc. We’re normally really good at empathizing with storytellers, too – studies show that two people’s brains will light up in the same areas on fMRIs when one tells a story and the other listens, or when two people listen to the same story; it’s almost as if they live each other’s experiences.

So imagine now if every person who was out to harm another could instantly feel that other’s story: live their life, understand their struggles, experience their happiness. Or if we felt so connected to each other that causing pain to another person was like causing pain to ourselves, a concept explored by Buddhists on their path to Enlightenment. Seems like it would put to rest a whole lot of conflict.

Enter Megabrain, Er, Brain-to-Brain Interfaces

Could it work? Turns out, the technology that might enable this already exists. It’s easiest to understand if taken in the following parts: given we’re seeking brain-to-brain (input/output) interfaces, we’ll look for brain-to-computer (input) interfaces first, then for computer-to-brain interfaces (output), then see if we can put them together.

Brain-to-Computer Interfaces

While some approaches to creating these connections are invasive, requiring surgery (for example, neural implants, used primarily for treating pathologies), there are a few that are non-invasive and available to consumers. I even own one!

Image via InteraXon, the company behind the Muse brain-sensing headband

It’s EEG-based, which means it works by detecting the electrical activity of our brainwaves. While InteraXon markets the Muse mainly as a meditation aid, they also provide a developer kit which I cannot wait to dig into – stay tuned for a post on that!

There are many other brain-to-computer interfaces, so if you’d like more detail, start with Wikipedia and go from there.

Computer-to-Brain Interfaces

Now that we’ve gotten some information to the computer, how do we transmit it back to a human brain? That part of the process seems more involved, as per this article in Extreme Tech:

For the CBI, which requires a more involved setup, a transcranial magnetic stimulation (TMS) rig was used. TMS is somewhat similar to TDCS, in that it can stimulate regions of neurons in your brain — but instead of electrical current, it uses magnetism. The important thing is that TMS is non-invasive — it can stimulate your brain (and thus cause you to think or feel a certain way) without having to actually cut into your brain and use some electrodes.

So, still non-invasive, but slightly more difficult to use. There are probably many other ways, which I haven’t found yet.

Brain-to-Brain Interfaces

Putting together brain-to-computer and computer-to-brain interfaces gets us – you guessed it – brain-to-brain interfaces, known colloquially as tele-freaking-pathy. Interestingly, researchers have already demonstrated a primitive version of this working. Here’s a diagram of the set-up:

And here’s how it actually works end-to-end:

The BCI reads the sender’s thoughts – in this case, the sender thinks about moving his or her hands or feet. Thinking about feet is equivalent to binary 0, while hands is binary 1. With a little time/effort, whole words can be encoded as a stream of ones and zeroes. These encoded words are then transmitted (via the internet or some other network) to the recipient, who is wearing a TMS. The TMS is focused on the recipient’s visual cortex. When the TMS receives a “1” from the sender, it stimulates a region in the visual cortex that produces a phosphene – the phenomenon whereby you see flashes of light, without light actually hitting your retina (when you rub your eyes, for example). The recipient “sees” these phosphenes at the bottom of their visual field. By decoding the flashes – phosphene flash = 1, no phosphene = 0 – the recipient can “read” the word being sent.
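To make that bit-by-bit scheme a little more concrete, here is a toy Python sketch of the round trip. Big caveat: this only simulates the encoding logic, not any real BCI or TMS hardware. The function names are made up for illustration, and the 8-bits-per-letter ASCII mapping is my own assumption, since the article doesn't say how the researchers turned letters into ones and zeroes.

```python
# Toy simulation of the feet/hands -> phosphene scheme described above.
# Assumption: 8-bit ASCII per character. The article does not specify
# how letters were actually mapped to bits.
BITS_PER_CHAR = 8


def encode_word(word):
    """Sender side: turn a word into the movements the sender imagines.

    Imagining "hands" stands for binary 1, "feet" for binary 0.
    """
    bits = "".join(format(byte, f"0{BITS_PER_CHAR}b") for byte in word.encode("ascii"))
    return ["hands" if bit == "1" else "feet" for bit in bits]


def stimulate(movements):
    """Recipient side: a 1 ("hands") triggers a phosphene, a 0 does not.

    Returns a list of booleans, True meaning the recipient saw a flash.
    """
    return [movement == "hands" for movement in movements]


def decode_flashes(flashes):
    """Recipient reads the flashes back as bits, then as characters."""
    bits = "".join("1" if flash else "0" for flash in flashes)
    chunks = [bits[i:i + BITS_PER_CHAR] for i in range(0, len(bits), BITS_PER_CHAR)]
    return "".join(chr(int(chunk, 2)) for chunk in chunks)


movements = encode_word("hola")
flashes = stimulate(movements)
print(len(movements), "imagined movements")
print(decode_flashes(flashes))
```

Under this assumed encoding, even a four-letter word takes 32 separate imagined movements, which gives a feel for why the “little time/effort” in the quote is doing some heavy lifting.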

Using a similar rig, other researchers have also allowed participants to play a game of Telepathic 20 Questions. Check out this video:

And then there was the time that participants also played a game, but one person could see the screen and the other couldn’t, so the first person would brain-tell the second person when to shoot. Here’s another (albeit nerdy) video:

You know, NBD.

Potential Downsides of Brain-to-Brain Connectivity

This feels like a great topic for another post, so let me just outline a few that came to mind and leave you hanging:

Hacking

Sameness / homogeneity of thought

Lack of freedom of thought

Lack of joy of learning / newness

Technological singularity